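The previous posts all used SemanticKernel to connect to the OpenAI API, which costs money and comes with usage limits. This post shows how to use the open-source model LLama3 in SK.

First, add the NuGet packages. This uses the third-party package LLamaSharp. Since there is no GPU, it can only run on the CPU, so the corresponding Cpu backend package is needed too. Finally, add the LLama package for SK.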

    <ItemGroup>
      <PackageReference Include="LLamaSharp" Version="0.11.2" />
      <PackageReference Include="LLamaSharp.Backend.Cpu" Version="0.11.2" />
      <PackageReference Include="LLamaSharp.semantic-kernel" Version="0.11.2" />
    </ItemGroup>

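Next, download the latest LLama3 model; the file has a `.gguf` extension. With the following code, the local small model can be up and running easily.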

    using LLama.Common;
    using LLama;
    using LLamaSharp.SemanticKernel.ChatCompletion;
    using System.Text;
    using ChatHistory = LLama.Common.ChatHistory;
    using AuthorRole = LLama.Common.AuthorRole;

    await SKRunAsync();

    async Task SKRunAsync()
    {
        // Path to the downloaded .gguf model file; replace with your own.
        var modelPath = @"C:\llama\llama-2-coder-7b.Q8_0.gguf";
        var parameters = new ModelParams(modelPath)
        {
            ContextSize = 1024,
            Seed = 1337,
            // Layers to offload to the GPU; only relevant with a GPU backend.
            GpuLayerCount = 5,
            Encoding = Encoding.UTF8,
        };
        using var model = LLamaWeights.LoadFromFile(parameters);
        var ex = new StatelessExecutor(model, parameters);
        var chatGPT = new LLamaSharpChatCompletion(ex);

        // System prompt: "This is a conversation between assistant and user. The assistant
        // is a .NET and C# expert who can accurately answer the user's technical questions."
        var chatHistory = chatGPT.CreateNewChat(@"这是assistant和user之间的对话。assistant是一名.net和C#专家,能准确回答user提出的专业问题。");

        Console.WriteLine("开始聊天:"); // "Start chatting:"
        Console.WriteLine("------------------------");
        while (true)
        {
            Console.Write("user:");
            var userMessage = Console.ReadLine();
            if (userMessage is null) break; // end of input
            chatHistory.AddUserMessage(userMessage);

            var first = true;
            var content = "";
            // Stream the reply and print each chunk as it arrives.
            await foreach (var reply in chatGPT.GetStreamingChatMessageContentsAsync(chatHistory))
            {
                if (first)
                {
                    first = false;
                    Console.Write(reply.Role + ":");
                }
                content += reply.Content;
                Console.Write(reply.Content);
            }
            // Store the full reply so the next turn sees the whole conversation.
            chatHistory.AddAssistantMessage(content);
        }
    }
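Since the model file is a large download, it can be worth a quick sanity check before pointing `modelPath` at it. GGUF files begin with the four ASCII bytes `GGUF`, so a minimal sketch of such a check might look like the following. Note that `IsGgufFile` is a hypothetical helper written for this post and is not part of LLamaSharp; it only looks at the file header and says nothing about whether the rest of the download is intact.

```csharp
using System;
using System.IO;
using System.Text;

// Hypothetical helper (not a LLamaSharp API): returns true when the file
// exists and starts with the 4-byte ASCII magic "GGUF".
static bool IsGgufFile(string path)
{
    if (!File.Exists(path)) return false;

    using var stream = File.OpenRead(path);
    var magic = new byte[4];
    var total = 0;
    // Read may return fewer bytes than requested, so loop until 4 bytes or EOF.
    while (total < magic.Length)
    {
        var read = stream.Read(magic, total, magic.Length - total);
        if (read == 0) break;
        total += read;
    }
    return total == magic.Length && Encoding.ASCII.GetString(magic) == "GGUF";
}

// Usage: pass the model path as the first command-line argument.
if (args.Length > 0)
{
    Console.WriteLine(IsGgufFile(args[0])
        ? "Looks like a GGUF file."
        : "Missing file or not a GGUF file.");
}
```

Run it with the model path as an argument, for example `dotnet run -- C:\llama\llama-2-coder-7b.Q8_0.gguf`.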

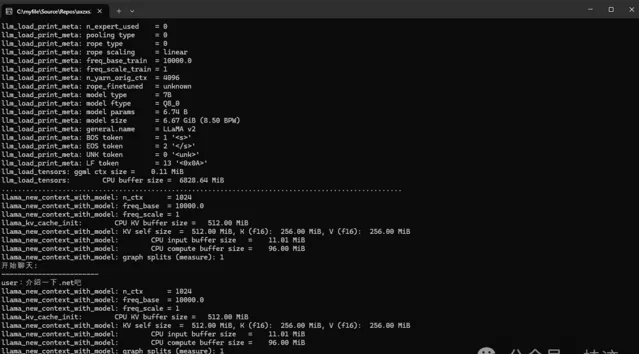

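The screenshot above shows the actual result. Apart from being a bit slower and somewhat less capable than GPT, everything else is great. Best of all, there is no API key: it runs easily, with no worry about running up the credit card bill.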