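This article introduces a lightweight log collection solution for Kubernetes. I have run it in production myself, and what surprised me is how few cluster resources it needs: compared with Loki, the ELK stack is far heavier. Let's get started.

1. Why use Loki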

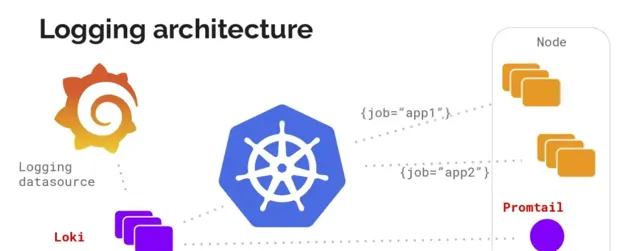

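This article focuses on Loki, the log collection application developed by Grafana. Loki is a lightweight application for collecting and analyzing logs. It uses Promtail to fetch log content and ship it to Loki for storage; you then add Loki as a data source in Grafana to display and query the logs.

Loki's persistent storage supports five types: azure, gcs, s3, swift, and local, of which s3 and local are the most commonly used. It also works with many log collectors; the widely used Logstash and Fluentbit are both on the officially supported list.

So what are its advantages?

It supports many clients, such as Promtail, Fluentbit, Fluentd, Vector, Logstash, and the Grafana Agent

Promtail, the preferred agent, can extract logs from many sources, including local log files, systemd, Windows event logs, and the Docker logging driver

There are no log format requirements: JSON, XML, CSV, logfmt, and unstructured text all work

Logs are queried with the same syntax used to query metrics

Log lines can be filtered and transformed dynamically at query time

The metrics you need can easily be computed from logs

Minimal indexing at ingestion time means you can slice and dice logs dynamically at query time to answer new questions as they arise

Cloud-native support, with data scraped the Prometheus way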

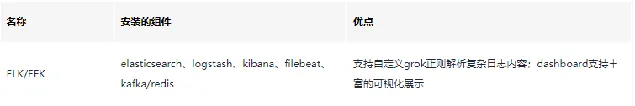

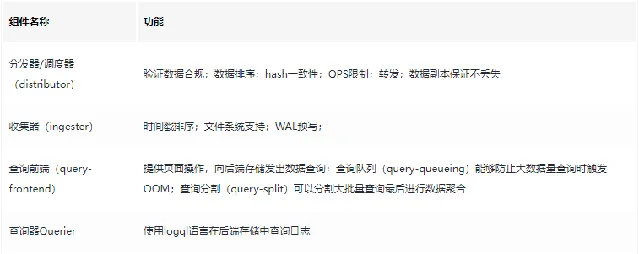

A brief comparison of log collection components

2. How Loki works

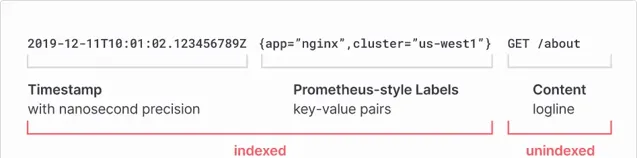

Log parsing format

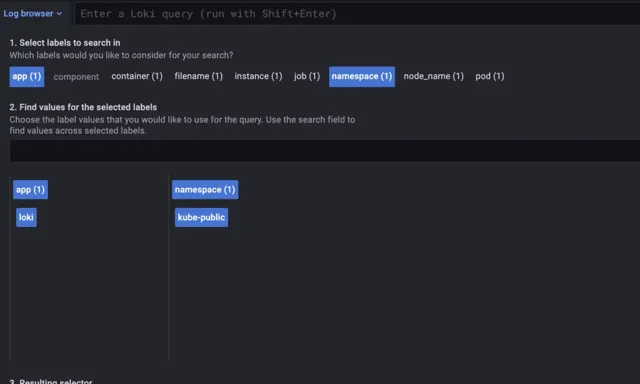

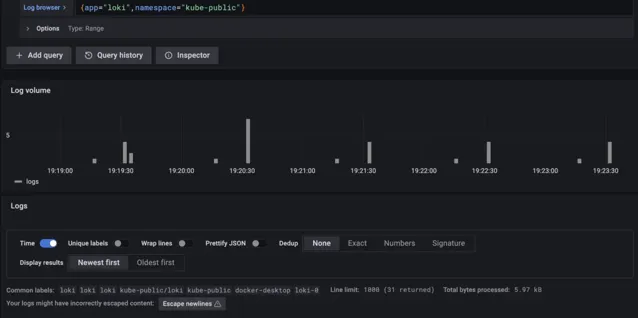

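As the figures above show, Loki parses logs around an index. The index consists of the timestamp and some of the pod's labels (other labels include filename, containers, and so on); everything else is log content. In a query such as {app="loki",namespace="kube-public"}, the label selector is the index.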
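To make the label-based index concrete, here is a minimal Python sketch that renders a set of labels as a selector of the form shown above. The function name `build_selector` is hypothetical and only illustrates the syntax; it is not part of Loki or Grafana.

```python
def build_selector(labels):
    """Render a label dict as a LogQL stream selector, e.g. {app="loki"}."""
    parts = []
    for name, value in labels.items():
        # Escape backslashes and double quotes so the value stays a valid string literal.
        escaped = value.replace("\\", "\\\\").replace('"', '\\"')
        parts.append(f'{name}="{escaped}"')
    return "{" + ",".join(parts) + "}"


print(build_selector({"app": "loki", "namespace": "kube-public"}))
```

Only the labels inside the braces are indexed; anything you search for in the log text itself is matched at query time.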

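Log collection architecture

In practice, the official recommendation is to use Promtail as the agent, deployed as a DaemonSet on the Kubernetes worker nodes to collect logs. The other log collectors mentioned above can be used as well; their configuration is covered at the end of this article.

Loki deployment modes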

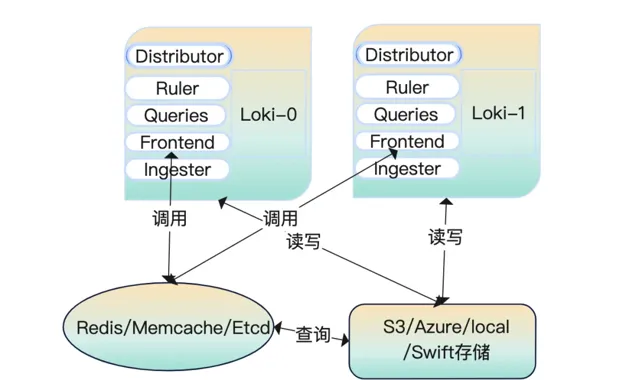

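Loki is built from several component microservices, five in total. Caches can be added to these five components to hold data and speed up queries. Data lives in shared storage, and the memberlist_config section is configured so that instances share state, which lets Loki scale out horizontally without limit.

Once the memberlist_config section is configured, data is located by polling the instances in turn. For convenience, all the microservices are compiled into a single binary whose role is controlled by the -target command-line flag, which supports all, read, and write. Choose the mode at deployment time according to your log volume.

all (read/write mode)

After the service starts, every data query and every data write is handled by this single node. See the diagram below: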

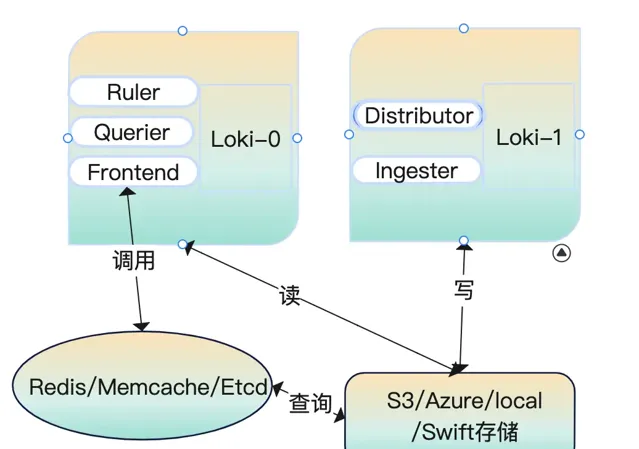

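read/write (read/write separation mode)

When running in read/write separation mode, the query-frontend forwards query traffic to the read nodes. The read nodes keep the querier, ruler, and query-frontend; the write nodes keep the distributor and ingester.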
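The split described above can be summarized in a short Python sketch. The component lists follow this article's description only, and `loki_args` is a hypothetical helper that assembles the two flags used in this article (`-config.file` and `-target`); the default path is the one used in the deployment section.

```python
# Components kept by each -target value, as described in the text above.
TARGET_COMPONENTS = {
    "read": ["querier", "ruler", "query-frontend"],
    "write": ["distributor", "ingester"],
}
# In "all" mode a single process serves both the write path and the read path.
TARGET_COMPONENTS["all"] = TARGET_COMPONENTS["write"] + TARGET_COMPONENTS["read"]


def loki_args(target, config_file="/etc/loki/loki-all.yaml"):
    """Build the Loki command-line arguments for the given target."""
    if target not in TARGET_COMPONENTS:
        raise ValueError(f"unsupported target: {target}")
    return [f"-config.file={config_file}", f"-target={target}"]


print(loki_args("read"), TARGET_COMPONENTS["read"])
```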

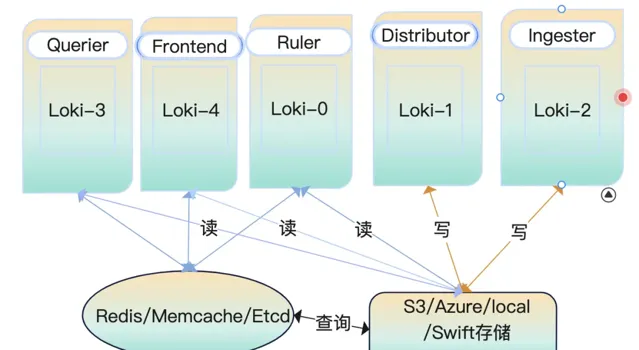

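Microservices mode

In microservices mode, each process is started in a different role through its configuration parameters, and each process serves only its target role.

3. Server-side deployment

With Loki's background and operating modes covered, you are probably wondering how it is deployed: how to deploy it, where to deploy it, and how to use it afterward.

Before deploying, you need a k8s cluster ready. With that in place, read on.

AllInOne deployment mode

① Deploying on k8s

The program downloaded from GitHub ships without a configuration file, so you need to prepare one in advance. Below is a complete allInOne configuration file, with some settings tuned.

The configuration file is as follows: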

auth_enabled: false
target: all
ballast_bytes: 20480
server:
  grpc_listen_port: 9095
  http_listen_port: 3100
  graceful_shutdown_timeout: 20s
  grpc_listen_address: "0.0.0.0"
  grpc_listen_network: "tcp"
  grpc_server_max_concurrent_streams: 100
  grpc_server_max_recv_msg_size: 4194304
  grpc_server_max_send_msg_size: 4194304
  http_server_idle_timeout: 2m
  http_listen_address: "0.0.0.0"
  http_listen_network: "tcp"
  http_server_read_timeout: 30s
  http_server_write_timeout: 20s
  log_source_ips_enabled: true
  # If http_path_prefix is changed, the same prefix must be added to the URL used when pushing logs
  # http_path_prefix: "/"
  register_instrumentation: true
  log_format: json
  log_level: info

distributor:
  ring:
    heartbeat_timeout: 3s
    kvstore:
      prefix: collectors/
      store: memberlist
      # A consul cluster must be set up in advance
      # consul:
      #   http_client_timeout: 20s
      #   consistent_reads: true
      #   host: 127.0.0.1:8500
      #   watch_burst_size: 2
      #   watch_rate_limit: 2

querier:
  engine:
    max_look_back_period: 20s
    timeout: 3m0s
  extra_query_delay: 100ms
  max_concurrent: 10
  multi_tenant_queries_enabled: true
  query_ingester_only: false
  query_ingesters_within: 3h0m0s
  query_store_only: false
  query_timeout: 5m0s
  tail_max_duration: 1h0s

query_scheduler:
  max_outstanding_requests_per_tenant: 2048
  grpc_client_config:
    max_recv_msg_size: 104857600
    max_send_msg_size: 16777216
    grpc_compression: gzip
    rate_limit: 0
    rate_limit_burst: 0
    backoff_on_ratelimits: false
    backoff_config:
      min_period: 50ms
      max_period: 15s
      max_retries: 5
  use_scheduler_ring: true
  scheduler_ring:
    kvstore:
      store: memberlist
      prefix: "collectors/"
    heartbeat_period: 30s
    heartbeat_timeout: 1m0s
    # Defaults to the name of the first network interface
    # instance_interface_names
    # instance_addr: 127.0.0.1
    # Defaults to server.grpc-listen-port
    instance_port: 9095

frontend:
  max_outstanding_per_tenant: 4096
  querier_forget_delay: 1h0s
  compress_responses: true
  log_queries_longer_than: 2m0s
  max_body_size: 104857600
  query_stats_enabled: true
  scheduler_dns_lookup_period: 10s
  scheduler_worker_concurrency: 15

query_range:
  align_queries_with_step: true
  cache_results: true
  parallelise_shardable_queries: true
  max_retries: 3
  results_cache:
    cache:
      enable_fifocache: false
      default_validity: 30s
      background:
        writeback_buffer: 10000
      redis:
        endpoint: 127.0.0.1:6379
        timeout: 1s
        expiration: 0s
        db: 9
        pool_size: 128
        password: 1521Qyx6^
        tls_enabled: false
        tls_insecure_skip_verify: true
        idle_timeout: 10s
        max_connection_age: 8h

ruler:
  enable_api: true
  enable_sharding: true
  alertmanager_refresh_interval: 1m
  disable_rule_group_label: false
  evaluation_interval: 1m0s
  flush_period: 3m0s
  for_grace_period: 20m0s
  for_outage_tolerance: 1h0s
  notification_queue_capacity: 10000
  notification_timeout: 4s
  poll_interval: 10m0s
  query_stats_enabled: true
  remote_write:
    config_refresh_period: 10s
    enabled: false
  resend_delay: 2m0s
  rule_path: /rulers
  search_pending_for: 5m0s
  storage:
    local:
      directory: /data/loki/rulers
    type: configdb
  sharding_strategy: default
  wal_cleaner:
    period: 240h
    min_age: 12h0m0s
  wal:
    dir: /data/loki/ruler_wal
    max_age: 4h0m0s
    min_age: 5m0s
    truncate_frequency: 1h0m0s
  ring:
    kvstore:
      store: memberlist
      prefix: "collectors/"
    heartbeat_period: 5s
    heartbeat_timeout: 1m0s
    # instance_addr: "127.0.0.1"
    # instance_id: "miyamoto.en0"
    # instance_interface_names: ["en0","lo0"]
    instance_port: 9500
    num_tokens: 100

ingester_client:
  pool_config:
    health_check_ingesters: false
    client_cleanup_period: 10s
    remote_timeout: 3s
  remote_timeout: 5s

ingester:
  autoforget_unhealthy: true
  chunk_encoding: gzip
  chunk_target_size: 1572864
  max_transfer_retries: 0
  sync_min_utilization: 3.5
  sync_period: 20s
  flush_check_period: 30s
  flush_op_timeout: 10m0s
  chunk_retain_period: 1m30s
  chunk_block_size: 262144
  chunk_idle_period: 1h0s
  max_returned_stream_errors: 20
  concurrent_flushes: 3
  index_shards: 32
  max_chunk_age: 2h0m0s
  query_store_max_look_back_period: 3h30m30s
  wal:
    enabled: true
    dir: /data/loki/wal
    flush_on_shutdown: true
    checkpoint_duration: 15m
    replay_memory_ceiling: 2GB
  lifecycler:
    ring:
      kvstore:
        store: memberlist
        prefix: "collectors/"
      heartbeat_timeout: 30s
      replication_factor: 1
    num_tokens: 128
    heartbeat_period: 5s
    join_after: 5s
    observe_period: 1m0s
    # interface_names: ["en0","lo0"]
    final_sleep: 10s
    min_ready_duration: 15s

storage_config:
  boltdb:
    directory: /data/loki/boltdb
  boltdb_shipper:
    active_index_directory: /data/loki/active_index
    build_per_tenant_index: true
    cache_location: /data/loki/cache
    cache_ttl: 48h
    resync_interval: 5m
    query_ready_num_days: 5
    index_gateway_client:
      grpc_client_config:
  filesystem:
    directory: /data/loki/chunks

chunk_store_config:
  chunk_cache_config:
    enable_fifocache: true
    default_validity: 30s
    background:
      writeback_buffer: 10000
    redis:
      endpoint: 192.168.3.56:6379
      timeout: 1s
      expiration: 0s
      db: 8
      pool_size: 128
      password: 1521Qyx6^
      tls_enabled: false
      tls_insecure_skip_verify: true
      idle_timeout: 10s
      max_connection_age: 8h
    fifocache:
      ttl: 1h
      validity: 30m0s
      max_size_items: 2000
      max_size_bytes: 500MB
  write_dedupe_cache_config:
    enable_fifocache: true
    default_validity: 30s
    background:
      writeback_buffer: 10000
    redis:
      endpoint: 127.0.0.1:6379
      timeout: 1s
      expiration: 0s
      db: 7
      pool_size: 128
      password: 1521Qyx6^
      tls_enabled: false
      tls_insecure_skip_verify: true
      idle_timeout: 10s
      max_connection_age: 8h
    fifocache:
      ttl: 1h
      validity: 30m0s
      max_size_items: 2000
      max_size_bytes: 500MB
  cache_lookups_older_than: 10s

# Compacts fragmented index files
compactor:
  shared_store: filesystem
  shared_store_key_prefix: index/
  working_directory: /data/loki/compactor
  compaction_interval: 10m0s
  retention_enabled: true
  retention_delete_delay: 2h0m0s
  retention_delete_worker_count: 150
  delete_request_cancel_period: 24h0m0s
  max_compaction_parallelism: 2
  # compactor_ring:
frontend_worker:
  match_max_concurrent: true
  parallelism: 10
  dns_lookup_duration: 5s
# runtime_config: nothing is configured here
# runtime_config:

common:
  storage:
    filesystem:
      chunks_directory: /data/loki/chunks
      rules_directory: /data/loki/rulers
  replication_factor: 3
  persist_tokens: false
  # instance_interface_names: ["en0","eth0","ens33"]
analytics:
  reporting_enabled: false

limits_config:
  ingestion_rate_strategy: global
  ingestion_rate_mb: 100
  ingestion_burst_size_mb: 18
  max_label_name_length: 2096
  max_label_value_length: 2048
  max_label_names_per_series: 60
  enforce_metric_name: true
  max_entries_limit_per_query: 5000
  reject_old_samples: true
  reject_old_samples_max_age: 168h
  creation_grace_period: 20m0s
  max_global_streams_per_user: 5000
  unordered_writes: true
  max_chunks_per_query: 200000
  max_query_length: 721h
  max_query_parallelism: 64
  max_query_series: 700
  cardinality_limit: 100000
  max_streams_matchers_per_query: 1000
  max_concurrent_tail_requests: 10
  ruler_evaluation_delay_duration: 3s
  ruler_max_rules_per_rule_group: 0
  ruler_max_rule_groups_per_tenant: 0
  retention_period: 700h
  per_tenant_override_period: 20s
  max_cache_freshness_per_query: 2m0s
  max_queriers_per_tenant: 0
  per_stream_rate_limit: 6MB
  per_stream_rate_limit_burst: 50MB
  max_query_lookback: 0
  ruler_remote_write_disabled: false
  min_sharding_lookback: 0s
  split_queries_by_interval: 10m0s
  max_line_size: 30mb
  max_line_size_truncate: false
  max_streams_per_user: 0

# The memberlist_config block configures the gossip ring used to discover and connect distributors, ingesters, and queriers.
# The configuration is shared by all three components, which ensures a single ring.
# Once at least one join_members entry is defined, a kvstore of type memberlist is configured automatically for the distributor, the ingester, and the rings.
memberlist:
  randomize_node_name: true
  stream_timeout: 5s
  retransmit_factor: 4
  join_members:
    - 'loki-memberlist'
  abort_if_cluster_join_fails: true
  advertise_addr: 0.0.0.0
  advertise_port: 7946
  bind_addr: ["0.0.0.0"]
  bind_port: 7946
  compression_enabled: true
  dead_node_reclaim_time: 30s
  gossip_interval: 100ms
  gossip_nodes: 3
  gossip_to_dead_nodes_time: 3
  # join:
  leave_timeout: 15s
  left_ingesters_timeout: 3m0s
  max_join_backoff: 1m0s
  max_join_retries: 5
  message_history_buffer_bytes: 4096
  min_join_backoff: 2s
  # node_name: miyamoto
  packet_dial_timeout: 5s
  packet_write_timeout: 5s
  pull_push_interval: 100ms
  rejoin_interval: 10s
  tls_enabled: false
  tls_insecure_skip_verify: true

schema_config:
  configs:
    - from: "2020-10-24"
      index:
        period: 24h
        prefix: index_
      object_store: filesystem
      schema: v11
      store: boltdb-shipper
      chunks:
        period: 168h
      row_shards: 32

table_manager:
  retention_deletes_enabled: false
  retention_period: 0s
  throughput_updates_disabled: false
  poll_interval: 3m0s
  creation_grace_period: 20m
  index_tables_provisioning:
    provisioned_write_throughput: 1000
    provisioned_read_throughput: 500
    inactive_write_throughput: 4
    inactive_read_throughput: 300
    inactive_write_scale_lastn: 50
    enable_inactive_throughput_on_demand_mode: true
    enable_ondemand_throughput_mode: true
    inactive_read_scale_lastn: 10
    write_scale:
      enabled: true
      target: 80
      # role_arn:
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
    inactive_write_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
    read_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
    inactive_read_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
  chunk_tables_provisioning:
    enable_inactive_throughput_on_demand_mode: true
    enable_ondemand_throughput_mode: true
    provisioned_write_throughput: 1000
    provisioned_read_throughput: 300
    inactive_write_throughput: 1
    inactive_write_scale_lastn: 50
    inactive_read_throughput: 300
    inactive_read_scale_lastn: 10
    write_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
    inactive_write_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
    read_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
    inactive_read_scale:
      enabled: true
      target: 80
      out_cooldown: 1800
      min_capacity: 3000
      max_capacity: 6000
      in_cooldown: 1800
tracing:
  enabled: true

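Notes:

ingester.lifecycler.ring.replication_factor should be 1 when running a single instance.

ingester.lifecycler.min_ready_duration is 15s, so after startup the instance waits 15 seconds by default before its state changes to ready.

memberlist.node_name does not need to be set; it defaults to the name of the current host.

memberlist.join_members is a list. With multiple instances, add the hostname or IP address of each node. In k8s it can be set to a Service bound to the StatefulSet.

query_range.results_cache.cache.enable_fifocache is best set to false, as in the configuration above.

instance_interface_names is a list that defaults to ["en0","eth0"]. Set it to the relevant network interface names if needed; usually no special setting is required.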

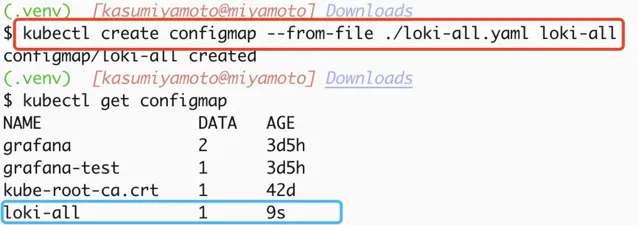

Create the ConfigMap

Save the content above to a file named loki-all.yaml and load it into the k8s cluster as a ConfigMap, using the following command:

kubectl create configmap --from-file ./loki-all.yaml loki-all
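For readers who prefer a declarative manifest over the imperative command, the following Python sketch builds roughly the object that the command above creates. The helper name `configmap_manifest` is hypothetical, and the short string passed as content is a stand-in for the real file; the output is JSON, which `kubectl apply -f` accepts just like YAML.

```python
import json


def configmap_manifest(name, filename, content, namespace="default"):
    """Build a ConfigMap manifest holding one file under the given key."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {"name": name, "namespace": namespace},
        "data": {filename: content},
    }


# Stand-in content; in practice, read the full loki-all.yaml from disk.
manifest = configmap_manifest("loki-all", "loki-all.yaml", "auth_enabled: false\ntarget: all\n")
print(json.dumps(manifest, indent=2))
```

The key under `data` becomes the file name inside the mounted directory, which is why it has to match the path given to `-config.file`.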

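You can then list the ConfigMap from the command line to confirm that it was created, as shown in the figure.

Create persistent storage

In k8s, the data needs to be persisted. The logs Loki collects are critical to the business, so they must survive container restarts.

That calls for a PV and a PVC. The backend can be nfs, glusterfs, hostPath, azureDisk, cephfs, or any of the roughly 20 supported types; since no such environment was available here, hostPath is used.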

apiVersion: v1
kind: PersistentVolume
metadata:
  name: loki
  namespace: default
spec:
  hostPath:
    path: /glusterfs/loki
    type: DirectoryOrCreate
  capacity:
    storage: 1Gi
  accessModes:
    - ReadWriteMany
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: loki
  namespace: default
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Gi
  volumeName: loki

Create the application

With the StatefulSet manifest prepared, you can create the application in the cluster directly.

apiVersion: apps/v1
kind: StatefulSet
metadata:
  labels:
    app: loki
  name: loki
  namespace: default
spec:
  podManagementPolicy: OrderedReady
  replicas: 1
  # Governing Service for the StatefulSet (the loki Service defined below)
  serviceName: loki
  selector:
    matchLabels:
      app: loki
  template:
    metadata:
      annotations:
        prometheus.io/port: http-metrics
        prometheus.io/scrape: "true"
      labels:
        app: loki
    spec:
      containers:
        - args:
            - -config.file=/etc/loki/loki-all.yaml
          image: grafana/loki:2.5.0
          imagePullPolicy: IfNotPresent
          livenessProbe:
            failureThreshold: 3
            httpGet:
              path: /ready
              port: http-metrics
              scheme: HTTP
            initialDelaySeconds: 45
            periodSeconds: 10
            successThreshold: 1
            timeoutSeconds: 1
          name: loki
          ports:
            - containerPort: 3100
              name: http-metrics
              protocol: TCP
            - containerPort: 9095
              name: grpc
              protocol: TCP
            - containerPort: 7946
              name: memberlist-port
              protocol: TCP
          readinessProbe:
            failureThreshold: 3
            httpGet:
              path: /ready
              port: http-metrics
              scheme: HTTP
            initialDelaySeconds: 45
            periodSeconds: 10
            successThreshold: 1
            timeoutSeconds: 1
          resources:
            requests:
              cpu: 500m
              memory: 500Mi
            limits:
              cpu: 500m
              memory: 500Mi
          securityContext:
            readOnlyRootFilesystem: true
          volumeMounts:
            - mountPath: /etc/loki
              name: config
            - mountPath: /data
              name: storage
      restartPolicy: Always
      securityContext:
        fsGroup: 10001
        runAsGroup: 10001
        runAsNonRoot: true
        runAsUser: 10001
      serviceAccount: loki
      serviceAccountName: loki
      volumes:
        - emptyDir: {}
          name: tmp
        - name: config
          configMap:
            # Must match the name of the ConfigMap created earlier
            name: loki-all
        - persistentVolumeClaim:
            claimName: loki
          name: storage

---

kind: Service
apiVersion: v1
metadata:
  name: loki-memberlist
  namespace: default
spec:
  ports:
    - name: loki-memberlist
      protocol: TCP
      port: 7946
      targetPort: 7946
  selector:
    # Must match the pod labels set in the StatefulSet template
    app: loki
---
kind: Service
apiVersion: v1
metadata:
  name: loki
  namespace: default
spec:
  ports:
    - name: loki
      protocol: TCP
      port: 3100
      targetPort: 3100
  selector:
    # Must match the pod labels set in the StatefulSet template
    app: loki

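The manifest above includes some pod-level security settings. There is also the cluster-level PodSecurityPolicy, which keeps a vulnerability from bringing down the whole cluster; for cluster-level PSP, see the official documentation.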

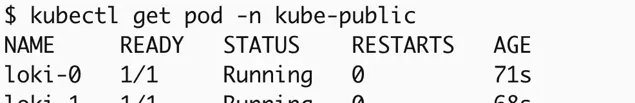

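Verify the deployment

Once the pod shows the Running state above, you can check through the API whether the distributor is working properly. Only when it shows Active will log streams be distributed to the ingester normally.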
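As a first check before looking at the distributor, you can call the same `/ready` path that the probes in the manifest use. The sketch below is a minimal example using only the Python standard library; `loki_is_ready` is a hypothetical helper, and the base URL assumes the Service name and port from this article. It does not inspect the ring state itself.

```python
import urllib.error
import urllib.request


def loki_is_ready(base_url, opener=urllib.request.urlopen, timeout=5):
    """Return True if GET <base_url>/ready answers with HTTP 200."""
    url = f"{base_url.rstrip('/')}/ready"
    try:
        with opener(url, timeout=timeout) as resp:
            return resp.status == 200
    except (urllib.error.URLError, OSError):
        # Connection refused, DNS failure, timeout, or a non-2xx answer.
        return False


if __name__ == "__main__":
    print(loki_is_ready("http://loki:3100"))
```

The `opener` parameter exists only so the function can be exercised without a running Loki instance.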

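② Bare-metal deployment

Place the loki binary in the system's /bin/ directory, prepare the grafana-loki.service unit file, and then reload the systemd service list.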

[Unit]

Description=Grafana Loki Log Ingester

Documentation=https://grafana.com/logs/

After=network-online.target

[Service]

ExecStart=/bin/loki --config.file /etc/loki/loki-all.yaml

ExecReload=/bin/kill -s HUP $MAINPID

ExecStop=/bin/kill -s TERM $MAINPID

[Install]

WantedBy=multi-user.target

Reload the service list so that systemd can manage the service directly:

systemctl daemon-reload

# Start the service
systemctl start grafana-loki

# Stop the service
systemctl stop grafana-loki

# Reload the application
systemctl reload grafana-loki

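4. Promtail deployment

Deploying the client that collects logs also requires a configuration file, created by following the same steps as for the server. The difference is that the client has to push log content to the server.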
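To show what a push to the server looks like on the wire, here is a minimal Python sketch of the JSON body accepted by Loki's push endpoint (`/loki/api/v1/push`): a list of streams, each with a label set and a list of [nanosecond timestamp, line] pairs. The helper `build_push_payload` and the sample labels are hypothetical; Promtail builds and sends these requests for you.

```python
import json
import time


def build_push_payload(labels, lines, now_ns=None):
    """Build a push request body with one stream and one entry per line."""
    if now_ns is None:
        now_ns = time.time_ns()
    # Timestamps are strings holding Unix time in nanoseconds.
    values = [[str(now_ns + i), line] for i, line in enumerate(lines)]
    return {"streams": [{"stream": dict(labels), "values": values}]}


payload = build_push_payload({"app": "demo", "namespace": "default"}, ["first line", "second line"])
print(json.dumps(payload, indent=2))
```

Sending this body with Content-Type application/json to the URL configured under clients is what delivers the log lines to Loki.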

① Deploying on k8s

Create the configuration file

server:
  log_level: info
  http_listen_port: 3101
clients:
  - url: http://loki:3100/loki/api/v1/push
positions:
  filename: /run/promtail/positions.yaml
scrape_configs:
  - job_name: kubernetes-pods
    pipeline_stages:
      - cri: {}
    kubernetes_sd_configs:
      - role: pod
    relabel_configs:
      - source_labels:
          - __meta_kubernetes_pod_controller_name
        regex: ([0-9a-z-.]+?)(-[0-9a-f]{8,10})?
        action: replace
        target_label: __tmp_controller_name
      - source_labels:
          - __meta_kubernetes_pod_label_app_kubernetes_io_name
          - __meta_kubernetes_pod_label_app
          - __tmp_controller_name
          - __meta_kubernetes_pod_name
        regex: ^;*([^;]+)(;.*)?$
        action: replace
        target_label: app
      - source_labels:
          - __meta_kubernetes_pod_label_app_kubernetes_io_instance
          - __meta_kubernetes_pod_label_release
        regex: ^;*([^;]+)(;.*)?$
        action: replace
        target_label: instance
      - source_labels:
          - __meta_kubernetes_pod_label_app_kubernetes_io_component
          - __meta_kubernetes_pod_label_component
        regex: ^;*([^;]+)(;.*)?$
        action: replace
        target_label: component
      - action: replace
        source_labels:
          - __meta_kubernetes_pod_node_name
        target_label: node_name
      - action: replace
        source_labels:
          - __meta_kubernetes_namespace
        target_label: namespace
      - action: replace
        replacement: $1
        separator: /
        source_labels:
          - namespace
          - app
        target_label: job
      - action: replace
        source_labels:
          - __meta_kubernetes_pod_name
        target_label: pod
      - action: replace
        source_labels:
          - __meta_kubernetes_pod_container_name
        target_label: container
      - action: replace
        replacement: /var/log/pods/*$1/*.log
        separator: /
        source_labels:
          - __meta_kubernetes_pod_uid
          - __meta_kubernetes_pod_container_name
        target_label: __path__
      - action: replace
        regex: true/(.*)
        replacement: /var/log/pods/*$1/*.log
        separator: /
        source_labels:
          - __meta_kubernetes_pod_annotationpresent_kubernetes_io_config_hash
          - __meta_kubernetes_pod_annotation_kubernetes_io_config_hash
          - __meta_kubernetes_pod_container_name
        target_label: __path__

Create a ConfigMap from the content above, using the same method as before.
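The regex `^;*([^;]+)(;.*)?$` used by several relabel rules in this configuration can look cryptic. Relabeling joins the source label values with ";" by default and matches the regex against the whole string, so this pattern picks the first non-empty value. The Python sketch below reproduces that behavior; `first_non_empty` is a hypothetical helper and the sample values are made up.

```python
import re

# Same pattern as in the relabel rules that set app, instance, and component.
FIRST_NON_EMPTY = re.compile(r"^;*([^;]+)(;.*)?$")


def first_non_empty(values, separator=";"):
    """Return the first non-empty value, or None if every value is empty."""
    joined = separator.join(values)
    match = FIRST_NON_EMPTY.fullmatch(joined)
    return match.group(1) if match else None


# app.kubernetes.io/name is missing, so the plain "app" label wins.
print(first_non_empty(["", "myapp", "loki", "loki-0"]))
# No labels and no controller: fall back to the pod name.
print(first_non_empty(["", "", "", "loki-0"]))
```

When nothing matches, the replace action leaves the target label unset, which is why the pod name is listed last as a fallback for the app label.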

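Create the DaemonSet manifest

Promtail is a stateless application and needs no persistent storage; it only has to be deployed into the cluster. As before, prepare a DaemonSet manifest.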

kind: DaemonSet
apiVersion: apps/v1
metadata:
  name: promtail
  namespace: default
  labels:
    app.kubernetes.io/instance: promtail
    app.kubernetes.io/name: promtail
    app.kubernetes.io/version: 2.5.0
spec:
  selector:
    matchLabels:
      app.kubernetes.io/instance: promtail
      app.kubernetes.io/name: promtail
  template:
    metadata:
      labels:
        app.kubernetes.io/instance: promtail
        app.kubernetes.io/name: promtail
    spec:
      volumes:
        - name: config
          configMap:
            name: promtail
        - name: run
          hostPath:
            path: /run/promtail
        - name: containers
          hostPath:
            path: /var/lib/docker/containers
        - name: pods
          hostPath:
            path: /var/log/pods
      containers:
        - name: promtail
          image: docker.io/grafana/promtail:2.3.0
          args:
            - '-config.file=/etc/promtail/promtail.yaml'
          ports:
            - name: http-metrics
              containerPort: 3101
              protocol: TCP
          env:
            - name: HOSTNAME
              valueFrom:
                fieldRef:
                  apiVersion: v1
                  fieldPath: spec.nodeName
          volumeMounts:
            - name: config
              mountPath: /etc/promtail
            - name: run
              mountPath: /run/promtail
            - name: containers
              readOnly: true
              mountPath: /var/lib/docker/containers
            - name: pods
              readOnly: true
              mountPath: /var/log/pods
          readinessProbe:
            httpGet:
              path: /ready
              port: http-metrics
              scheme: HTTP
            initialDelaySeconds: 10
            timeoutSeconds: 1
            periodSeconds: 10
            successThreshold: 1
            failureThreshold: 5
          imagePullPolicy: IfNotPresent
          securityContext:
            capabilities:
              drop:
                - ALL
            readOnlyRootFilesystem: false
            allowPrivilegeEscalation: false
      restartPolicy: Always
      serviceAccountName: promtail
      serviceAccount: promtail
      tolerations:
        - key: node-role.kubernetes.io/master
          operator: Exists
          effect: NoSchedule
        - key: node-role.kubernetes.io/control-plane
          operator: Exists
          effect: NoSchedule

Create the Promtail application

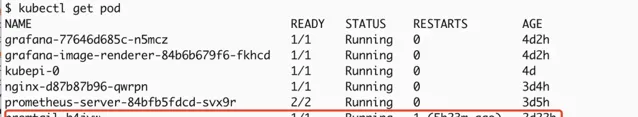

kubectl apply -f promtail.yaml

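After running the command above, the service is created. The next step is to add a data source in Grafana and view the data.

② Bare-metal deployment

For a bare-metal deployment, the configuration file above needs one small change: update the clients address. Store the file under /etc/loki/. For example:

clients:
  - url: http://ipaddress:port/loki/api/v1/push

To start the service at boot, add a systemd unit. The unit file is stored at /usr/lib/systemd/system/loki-promtail.service and contains the following: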

[Unit]

Description=Grafana Loki Log Ingester

Documentation=https://grafana.com/logs/

After=network-online.target

[Service]

ExecStart=/bin/promtail --config.file /etc/loki/loki-promtail.yaml

ExecReload=/bin/kill -s HUP $MAINPID

ExecStop=/bin/kill -s TERM $MAINPID

[Install]

WantedBy=multi-user.target

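Start it the same way as in the server deployment above.

5. Loki in DataSource

Add the data source

Steps: Grafana->Setting->DataSources->AddDataSource->Loki

Note:

For the HTTP URL, give the FQDN of the service according to the namespace in which the application is deployed. The format is ServiceName.namespace. If Loki runs in the default namespace and the port is 3100, enter http://loki:3100. A service name is used instead of an IP address because the DNS server inside the k8s cluster resolves it automatically.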
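The URL rule above can be written down as a small Python sketch. `loki_url` is a hypothetical helper; the namespace `loki` in the second call is only an example value.

```python
def loki_url(service, namespace=None, port=3100):
    """Build the data source URL in the ServiceName.namespace form."""
    host = service if namespace is None else f"{service}.{namespace}"
    return f"http://{host}:{port}"


# Grafana and Loki in the same namespace: the bare service name is enough.
print(loki_url("loki"))
# Loki in another namespace: append the namespace to the service name.
print(loki_url("loki", namespace="loki"))
```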

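Query log information

6. Other client configurations

Logstash as the log collection client

Install the plugin

After starting Logstash, install the Loki output plugin with the command below. Once it is installed, you can add the settings to the output section of Logstash.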

bin/logstash-plugin install logstash-output-loki

Add the configuration and test

For the complete Logstash configuration, see the LogstashConfigFile reference on the official site.

output {
  loki {
    [url => "" | default = none | required=true]
    [tenant_id => string | default = nil | required=false]
    [message_field => string | default = "message" | required=false]
    [include_fields => array | default = [] | required=false]
    [batch_wait => number | default = 1(s) | required=false]
    [batch_size => number | default = 102400(bytes) | required=false]
    [min_delay => number | default = 1(s) | required=false]
    [max_delay => number | default = 300(s) | required=false]
    [retries => number | default = 10 | required=false]
    [username => string | default = nil | required=false]
    [password => secret | default = nil | required=false]
    [cert => path | default = nil | required=false]
    [key => path | default = nil| required=false]
    [ca_cert => path | default = nil | required=false]
    [insecure_skip_verify => boolean | default = false | required=false]
  }
}

Alternatively, use the http output plugin of Logstash, configured as follows:

output {
  http {
    format => "json"
    http_method => "post"
    content_type => "application/json"
    connect_timeout => 10
    url => "http://loki:3100/loki/api/v1/push"
    message => '{"message":"%{message}"}'
  }
}

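7. Installing with Helm

For a simpler installation, you can use Helm. Helm packages all the installation steps and simplifies the process.

Helm is less suitable for people who want to understand k8s in depth. Because everything runs automatically once packaged, the k8s administrator does not see how the components depend on each other, which can lead to misunderstandings.

With that said, let's begin the Helm installation.

Add the repo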

helm repo add grafana https://grafana.github.io/helm-charts

Update the repo

helm repo update

Deploy

Default configuration

helm upgrade --install loki grafana/loki-simple-scalable

Custom namespace

helm upgrade --install loki --namespace=loki grafana/loki-simple-scalable

Custom configuration

helm upgrade --install loki grafana/loki-simple-scalable --set "key1=val1,key2=val2,..."
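If you script the installation, the three variants above differ only in the optional namespace and the `--set` pairs. The Python sketch below assembles the argument list; `helm_install_args` is a hypothetical helper, and `key1`/`key2` are the same placeholders used in the command above, not real chart values.

```python
def helm_install_args(release, chart, namespace=None, values=None):
    """Build the argument list for `helm upgrade --install`."""
    args = ["helm", "upgrade", "--install", release]
    if namespace:
        args.append(f"--namespace={namespace}")
    args.append(chart)
    if values:
        # --set takes comma-separated key=value pairs as one argument.
        pairs = ",".join(f"{key}={value}" for key, value in values.items())
        args += ["--set", pairs]
    return args


print(" ".join(helm_install_args("loki", "grafana/loki-simple-scalable", namespace="loki")))
```

Passing the list to `subprocess.run` avoids shell quoting problems with the `--set` value.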

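8. Troubleshooting

1. 502 BadGateWay

The Loki address is entered incorrectly

In k8s, a wrong address results in a 502. Check that the Loki address takes one of the following forms:

http://LokiServiceName

http://LokiServiceName.namespace

http://LokiServiceName.namespace:ServicePort

Grafana and Loki run on different nodes: check the network connectivity between the nodes and the firewall rules

2. Ingester not ready: instance xx:9095 in state JOINING

Wait a little while. In allInOne mode the program needs some time to start.

3. too many unhealthy instances in the ring

Change ingester.lifecycler.ring.replication_factor to 1; the error is caused by this setting being wrong. At startup it asks for several replicas, but only one instance is deployed, so this message appears when labels are queried.

4. Data source connected, but no labels received. Verify that Loki and Promtail is configured properly

Promtail cannot send the collected logs to Loki; check whether Promtail's output is normal

Promtail sent logs before Loki was ready, so Loki never received them. To have the logs sent again, delete the positions.yaml file; you can use find to locate it

Promtail ignored the target log files, or a configuration error kept it from starting properly

Promtail cannot find log files at the specified location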
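For the case where positions.yaml has to be deleted, the following Python sketch does the same job as a find command: it lists every file named positions.yaml under a directory. `find_positions_files` is a hypothetical helper; `/run` is suggested as a starting point only because the Promtail configuration in this article stores the file at /run/promtail/positions.yaml.

```python
from pathlib import Path


def find_positions_files(root, name="positions.yaml"):
    """Return all regular files called `name` below `root`, sorted by path."""
    return sorted(p for p in Path(root).rglob(name) if p.is_file())


if __name__ == "__main__":
    for path in find_positions_files("/run"):
        print(path)
```

Review the printed paths before deleting anything, then restart Promtail so that it reads the log files from the beginning.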

Official documentation:

https://kubernetes.io/docs/concepts/security/pod-security-policy


Author: 空x格

Source: juejin.cn/post/7150469420605767717
